JDMDH Call for Contribution: Special Issue on Computer-Aided Processing of Intertextuality in Ancient Languages

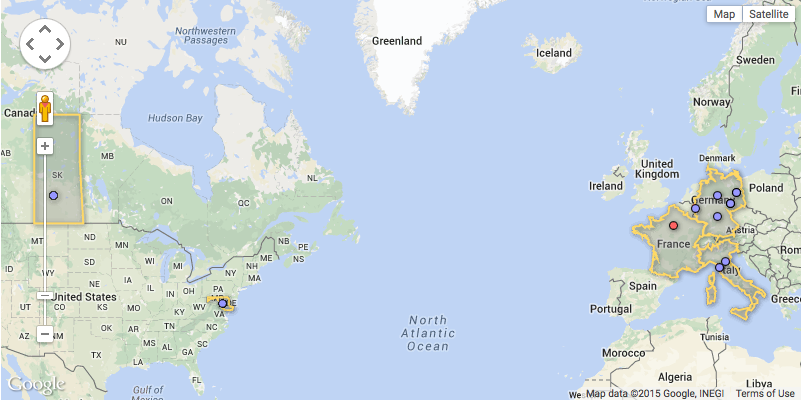

“Europe’s future is digital”. This was the headline of a speech given at the Hannover exhibition in April 2015 by Günther Oettinger, EU Commissioner for Digital Economy and Society. While businesses and industries have already made major advances in digital ecosystems, the digital transformation of texts spanning more than two millennia is far from complete. On the one hand, mass digitisation leads to an “information overload” of digitally available data; on the other, the “information poverty” caused by the loss of books and the fragmentary state of ancient texts leaves us with an incomplete and biased view of our past. In a digital ecosystem, this coexistence of data overload and poverty adds considerable complexity to scholarly research.